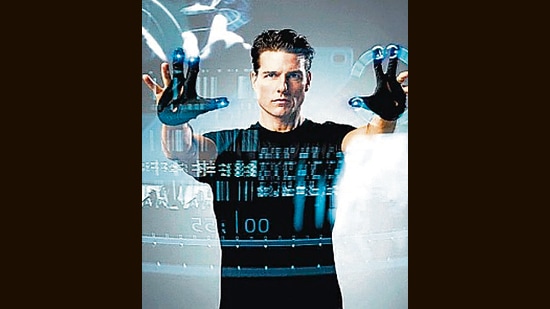

In Steven Spielberg’s Minority Report (2002), based on a dystopian 1956 novella by Philip K Dick, it’s 2054 and the world has advanced to self-driving cars (no such luck yet), voice-controlled home automation (check), robotic insects (somewhat), and gesture-controlled computers (got them for sure).

Amid it all, technology has also advanced to the point of predictive policing. This is a world in which masked men are apprehended as they set out to rob a bank; a mugger is stopped in his tracks, moments before his next crime.

There’s a human element (what tech system can function without one?). In this plot, it’s psychics or precogs, people with clairvoyant ability. In the film, the precogs can foretell the crime of murder. Even so, it turns out, the system is fatally flawed. The precogs can’t always agree on what they see; their lack of consensus is concealed, to preserve the image of the system as flawless.

There were echoes of some of this in 2011, when the US got its first predictive policing software driven by artificial intelligence. The Los Angeles Police Department’s flagship programme, Operation Laser, was used to pinpoint locations connected to gun and gang violence. It crunched information about past offenders over a two-year period, using technology developed by the data analysis firm Palantir, and sought to predict which individuals were most likely to commit a violent crime, based on personal criminal histories.

In addition to Laser, the LAPD was also using a piece of software called PredPol to predict “hot spots” with a high likelihood of property-related crimes. There were no dramatic tales of robberies halted before they could happen, or old ladies saved from a mugger. Instead, by 2019, Laser was shut down by the LAPD.

Both programmes had been widely criticised and discredited. The LAPD couldn’t explain the basis of the data it was given, and couldn’t be seen to act on it. “We discontinued Laser because we went to reassess the data,” a representative of the force told the Los Angeles Times in 2019. “It was inconsistent. We’re pulling back.”

In 2020, the LAPD cancelled its contract with PredPol too. But, in a development reminiscent of the Terminator movies, the red light was blinking again. In 2019, the LAPD had begun working with a company called Voyager Analytics, on a trial basis. Voyager claims it has an AI-driven solution that makes it possible to piece together a picture of human behaviour, affinity and intent based on people’s online and social media activity. “Our deep dive insights platform helps analysts reveal hidden connections, influencers and mediators who may be facilitating criminal activity,” the company website explains.

According to the Guardian, the LAPD’s trial with Voyager ended in November 2019. It is not clear why, or whether the LAPD continued to pursue a contract with Voyager.

Arrested by data

Voyager’s technology and services are representative of an emerging ecosystem of tech companies responding to law enforcement’s requirements for such tools to expand their policing capabilities.

For law enforcement, the motivation to use these tools is clear. They might help pinpoint hotspots of crime, discover suspects, or detect behaviour that would otherwise go unnoticed. With departments under enormous pressure to keep crime rates low and prevent attacks, this understandably seems like a viable solution. But there is a risk when too many of these decisions are made by an algorithm.

Investigative documents and reports also suggest that the LAPD has worked with, or considered working with, other data analytics and social media surveillance companies similar to Voyager, such as MediaSonar, Geofeedia and Dataminr.

Even assuming, for the moment, that the data-driven AI program is leagues ahead of all those that routinely fail to predict what we might want to watch, eat or do, there are two major hurdles to accurate predictive policing: the problem of data, and the problem of invisible bias.

As Facebook’s facial recognition and Twitter’s content warnings have shown, a machine can only form its opinions of right and wrong (or even human and non-human) based on the opinions of those who taught it how. This means that it takes trial and error to weed out bias, after the fact — a dangerous precedent if it were to ever be accepted in law enforcement.

The issue of data is a looming one too. Take a simple example: Say most crimes recorded in LA currently occur in a set number of zones within that city. Because this is the data currently available — data that does not reflect every crime committed in LA — the software stands a healthy chance of entering a vicious feedback loop, where it predicts more crimes in certain areas, simply because that’s where it spends most of its time looking.
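The feedback loop described above can be illustrated with a toy simulation. This is only a sketch under loudly simplified assumptions, not a model of Laser, PredPol or any real system: there are two hypothetical zones, a single daily patrol is sent to whichever zone has the most recorded crime, and only crime in the patrolled zone gets recorded. The function name and all the numbers are made up for illustration.

```python
def simulate_patrols(recorded, true_rate, days):
    """Send one daily patrol to the zone with the most recorded crime.

    Assumption: only crime in the patrolled zone is recorded, so the
    record grows where the patrol already is.
    """
    recorded = dict(recorded)  # copy so the caller's data is untouched
    for _ in range(days):
        target = max(recorded, key=recorded.get)  # zone with most records
        recorded[target] += true_rate[target]     # only this zone is observed
    return recorded


# Hypothetical numbers: both zones have the same underlying crime rate,
# but zone "A" starts with one more recorded incident than zone "B".
history = {"A": 11, "B": 10}
true_rate = {"A": 2, "B": 2}

result = simulate_patrols(history, true_rate, days=30)
print(result)  # {'A': 71, 'B': 10}
```

Although the two zones are identical in their underlying crime rate, a one-incident head start is enough for zone A to absorb every patrol, and the record ends up suggesting that A has seven times as much crime as B.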

Bias, transparency, and constitutional rights would need to be at the forefront of any technology designed for proactive policing, atop which there would need to be stringent regulations on use, says David L Weisburd, a criminologist who serves as chief science adviser at the US National Police Foundation in Washington, DC, which works to use new technology and innovation to improve law enforcement. Weisburd is also a professor at George Mason University in Virginia and the Hebrew University in Jerusalem.

“Attempts to forecast crime with algorithmic techniques could reinforce existing biases within the system,” says Weisburd. “The evidence for the effectiveness of predictive policing beyond what is already known, ie, the hotspots of crime, is unclear. The first problem with predictive policing is that you have to show that it’s effective. The second is that many of the predictive policing algorithms work privately.”

This means that a vital layer of transparency is lost, with potentially dangerous outcomes. PredPol, for instance, doesn’t entirely share information on its methods. “What data are you using and how?” asks Weisburd. “A police agency must be transparent to the public.”

Reading the fine print

The final hurdle is a more subtle but no less important one: the question of whether an individual’s or a population’s freedom and privacy ought to be compromised based on an algorithmic output, in the absence of any real-world evidence of wrongdoing.

There can be no ultimate answer to this one. As Weisburd points out, any form of policing involves a decision to give away a certain amount of freedom in exchange for a certain promise of security.

It is this exchange that allows a police force to make arrests, levy charges, question suspects, search premises. The trick is to not give up too much freedom, Weisburd says.

Even in fields that do not involve law enforcement, technology is making the privacy trade-off something users are forced to navigate. One logs into an app to hail a cab, not realising what one is giving away in exchange; what one gives away can also change with each upgrade.

When it comes to policing, Weisburd says, it is simply not acceptable for the police to use systems that are not transparent in terms of the data used, and how those data are used. “In modern democracies, you can’t have a drug approved for wide use until you’ve done research on its impacts. That research not only includes whether or not it works but also whether it harms. We should be using the same model in policing. We don’t. Technologies can be effective but cause harm at the same time. Governments need to pay attention to this issue and balance the benefits and potential harm.”
